Marginally Interesting - The Newsletter - Issue #2

Welcome to Issue #2! Today also marks six months of working as an independent consultant. It has been quite a ride, and it has been different from what I had expected, but in a good way. More on that some other time.

For the second issue, I've collected some articles from three areas: MLOps, the EU's proposal to regulate the use of AI, and some follow-up thoughts on writing software at scale.

I hope you'll find it interesting. If you want, you can reply to this newsletter and let me know what you think!

MLOps becoming standardized?

I have to be honest: when MLOps started, many of the products seemed to me to either just relabel whatever already existed for DevOps (CI/CD, pipelines) and market it to ML people, or to fit only very superficially what DevOps people think data scientists need (notebooks!!).

Netflix was one of the earliest companies I am aware of that did something interesting in that space, making notebooks the core piece of their infrastructure, but also doing other interesting things like snapshotting notebook runs to gain traceability.

More recently, I feel like a standardized view is starting to emerge. This article by Theofilos Papapanagiotou is a good overview of more advanced concepts like continuous training and model drift.

Towards MLOps: Technical capabilities of a Machine Learning platform | by Theofilos Papapanagiotou | Prosus AI Tech Blog | Apr, 2021 | Medium — medium.com Large organisations rely increasingly on continuous ML pipelines to keep their ever-increasing number of machine-learned models up-to-date. Reliability and scalability of these ML pipelines are…

The investors from Andreessen Horowitz had a similarly scoped article that also took a business view of the market. I found the article a bit lacking in technical detail. In particular, the example products they chose for their categories sometimes throw together technologies with wildly different levels of maturity and complexity.

The Emerging Architectures for Modern Data Infrastructure — a16z.com Software systems are increasingly based on data, rather than code. And a new class of tools and technologies have emerged to process data for both analytics and operational AI/ ML.

So, should we all just take these things and replicate them in our companies? One of the dangers of such articles IMHO is that suddenly everyone believes they need a feature store, a data mart, and model drift monitoring in production. In many cases, however, you are well served by a much smaller setup, and these articles often don't capture the relative importance of the components.
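To make the "much smaller setup" point a bit more concrete, here is a minimal sketch of what a first drift check could look like without any platform around it. It computes the Population Stability Index (PSI) for a single numeric feature, comparing training data against recent production data. The function name `psi`, the bin count, and the synthetic data are my own illustrative assumptions, not something taken from the articles above.

```python
import math
import random


def psi(reference, current, bins=10):
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def fractions(values):
        counts = [0] * bins
        for v in values:
            # Clamp values outside the reference range into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    ref, cur = fractions(reference), fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Synthetic stand-ins for "training" and "production" feature values.
random.seed(0)
train = [random.gauss(0.0, 1.0) for _ in range(5000)]
same = [random.gauss(0.0, 1.0) for _ in range(5000)]
shifted = [random.gauss(1.0, 1.0) for _ in range(5000)]

print(f"same distribution: {psi(train, same):.3f}")
print(f"shifted mean:      {psi(train, shifted):.3f}")
```

A common rule of thumb treats a PSI above roughly 0.2 as worth a closer look, but that threshold is a convention rather than a guarantee. A scheduled script like this may be all the drift monitoring a small team needs for a while.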

EU Artificial Intelligence Act Proposal

The EU has been working on a proposal to regulate the use of AI. I first noticed it through this tweet (which, naturally, got quite a bit of engagement).

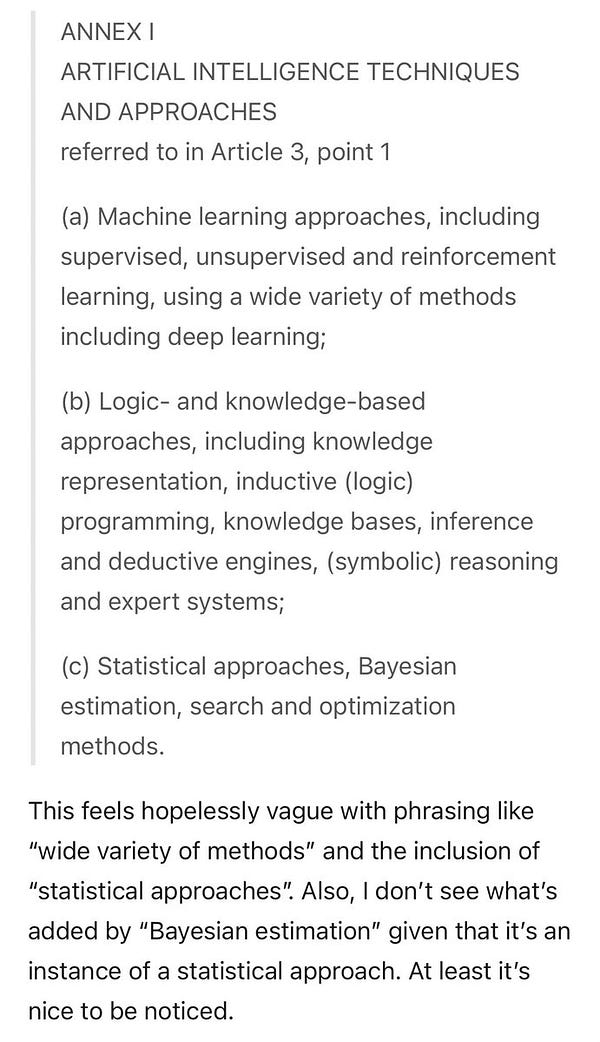

It is true that the proposal takes a difficult approach, defining what AI is through a list of technologies (apparently statisticians were surprised to learn they had been doing AI all along): ML approaches, logic- and knowledge-based approaches, as well as statistical approaches and search and optimization methods.

The section that lists what kinds of uses are prohibited is also rather vague on almost everything:

When are techniques subliminal and when aren't they? I'm not sure we've figured out what a person's consciousness is, or when exactly something is beyond it. Also, what about subliminal techniques that do not use AI? And so on.

I'm a bit skeptical that an approach which is, on the one hand, so tied to the technologies and methods we have right now and, on the other hand, so vague about what exactly is prohibited will work out well.

I don't know how this will end up in legislation, but I think it is good for everyone working in that space to be aware that this is happening.

Martin Goodson from evolution.ai has a great discussion on the difficulty of defining AI.

Why is it so difficult to define artificial intelligence? — www.evolution.ai The European Union published the Artificial Intelligence Act last week. The Act is the most wide-ranging set of rules for AI yet created by any legislative body. All technologists should be aware of its contents. But I will not be discussing much of that here. I'm interested in the Act's promise to provide 'a single future-proof definition of AI'.

Follow-up on Writing Software at Scale

In the last issue, I talked about the challenges of writing software at scale. Stefan Schmidt, CTO coach from Berlin, has written a provocative article suggesting the other extreme: just work alone. So many things become easier when you're working alone. I agree with his point that teams are sometimes built too early, when it is still unclear how to divide the work effectively.

Better work alone - DEV Community — dev.to Better work alone. When I look into startups as a coach, every one of them thinks more is better. Ev...

He also raises an interesting point: there are many tools and frameworks that make it possible to do a lot of things alone. If you need some pointers on how to get started, Anthony Simon from Panelbear has put together a list of the technology he uses to build his one-man SaaS.

The Tech Stack of a One-Man SaaS — panelbear.com There’s just something fascinating about getting to know what’s under the hood of other people’s businesses. It’s like gossip, but about software.

That's it for Issue #2, happy May 1st!