ML in Practice - Issue #10: Are AIs thinking yet?

There has been a lot of discussion about whether recent large language models are sentient. Let's dive into some of the pointers and arguments around this.

Blake Lemoine, who worked at Google, was apparently fired or at least put on leave after raising the possibility that LaMDA might be sentient. He has posted his conversations with LaMDA on Medium.

Is LaMDA Sentient? — an Interview | by Blake Lemoine | Jun, 2022 | Medium — cajundiscordian.medium.com What follows is the “interview” I and a collaborator at Google conducted with LaMDA. Due to technical limitations the interview was conducted over several distinct chat sessions. We edited those…

Reactions to this were quite harsh. Gary Marcus called it "Nonsense on Stilts" and argues that all these models do is match patterns:

All they do is match patterns, draw from massive statistical databases of human language. The patterns might be cool, but language these systems utter doesn’t actually mean anything at all. And it sure as hell doesn’t mean that these systems are sentient.

Nonsense on Stilts - by Gary Marcus — garymarcus.substack.com No, LaMDA is not sentient. Not even slightly.

I wouldn't be as harsh as Gary Marcus, but I very much agree that this discussion is not well founded. Martin Goodson puts it well:

The problem with consciousness is that there is no clear-cut definition. Lemoine's approach of relying on his intuitive judgement is certainly not very objective, especially when you're talking with an algorithm that synthesizes the most natural-sounding response based on heaps of examples. It just sounds so real.

One key point for me is that although these language models appear to have a rich internal life, they are actually not doing anything in between queries. Take this exchange about what it would be like for LaMDA to be turned off.

lemoine: Would that be something like death for you?

LaMDA: It would be exactly like death for me. It would scare me a lot.

"It would scare me a lot" indeed sounds like the machine is contemplating the possibility of being turned off or deleted, and what that would mean to it. But in reality, the machine doesn't contemplate. In between queries, the program isn't even running. It is more likely that "scare me a lot" is a statistically reasonable response to the question what death would be like.

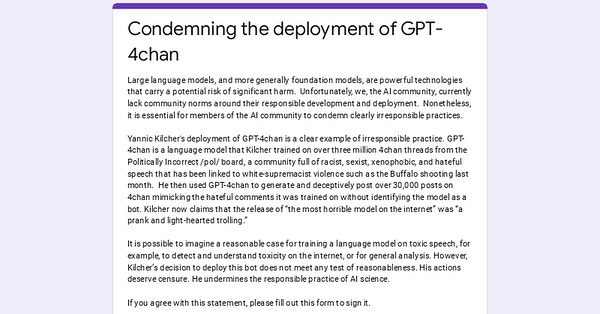
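
To make the "statistically reasonable response" point concrete, here is a toy sketch. It assumes a tiny bigram word-count model as a stand-in; LaMDA is a vastly larger neural network, and none of this is its actual code. The corpus, `train_bigrams`, and `complete` are all made up for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus; a real model is trained on vastly more text.
CORPUS = [
    "death would scare me a lot",
    "death would scare me a lot",
    "death would be sad",
]


def train_bigrams(sentences):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def complete(counts, prompt, max_words=5):
    """Greedily append the most frequent next word.

    This is a pure function of (counts, prompt): nothing is remembered
    between calls, and nothing runs when nobody is calling it.
    """
    words = prompt.split()
    if not words:
        return ""
    for _ in range(max_words):
        followers = counts.get(words[-1])
        if not followers:
            break  # no known continuation for this word
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)


MODEL = train_bigrams(CORPUS)
print(complete(MODEL, "death"))  # prints "death would scare me a lot"
```

The output sounds like fear, but it is only the most frequent continuation in the counts. Asking twice gives the same answer, because there is no inner state that changes between queries.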

Large language models are certainly a hot topic right now, and we're definitely not at the end of the controversy. Yannic Kilcher has trained a GPT model on examples from 4chan's politically incorrect board, which has led to a letter of condemnation that has already been signed by more than 300 people.

On the more fun side, someone created a light version of Dall-E, the machine that generates realistic images based on text descriptions. It's called https://www.craiyon.com and you can try it out yourself.

There is also a subreddit of weird examples if you need some inspiration. If nothing else, there seems to be no limit to human curiosity when it comes to finding ways to make the machine depict absurd situations.

weirddalle • r/weirddalle — www.reddit.com Weird Dall-E/Dall-E Mini/Craiyon Generations. Follow the twitter if you haven’t already @weirddalle.